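Since CentOS 8 is about to reach the end of its maintenance, I switched the system to Debian and reinstalled TensorFlow on Debian 10. This walkthrough uses Debian 10.11.0, and the machine has a GTX 1080 Ti graphics card. If you are installing on CentOS 8 instead, see the steps I wrote up earlier: Installing TensorFlow on CentOS 8 | OopsDump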

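Install Debian 10.11.0 first. I won't go through the installer step by step, but a few points are worth noting:

1. Choose English as the installation language if you can.

2. When partitioning, make the default system partition larger, or create a separate partition to mount at /opt.

3. I recommend installing the GNOME desktop environment.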

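Configuring Debian

All of the operations below require root privileges. One reminder: to switch to root on Debian, use "su -" rather than plain "su", otherwise $PATH will not include the sbin directories.

Disable automatic suspend: in the desktop, set Settings->Power->Automatic suspend = off.

For a machine you connect to remotely, you can also disable sleep and hibernation entirely (to re-enable them, change mask to unmask):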

# systemctl mask sleep.target suspend.target hibernate.target hybrid-sleep.target

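After installation, log in and point the package sources at local DVD images. This is the fastest way to install packages, a machine that stays offline does not need to worry about vulnerabilities, and it avoids the trouble automatic upgrades can cause. First create the directory /opt/debian_dvd/ and save the DVD 1-3 images and the firmware DVD image there. Then create a few subdirectories under /media/ to serve as mount points at boot; I used /media/debian_dvd1, /media/debian_dvd2, /media/debian_dvd3 and /media/debian_dvd4. Finally, edit /etc/fstab so the images are mounted at boot.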

(...)

/opt/debian_dvd/debian-10.11.0-amd64-DVD-1.iso /media/debian_dvd1 iso9660 defaults 0 0

/opt/debian_dvd/debian-10.11.0-amd64-DVD-2.iso /media/debian_dvd2 iso9660 defaults 0 0

/opt/debian_dvd/debian-10.11.0-amd64-DVD-3.iso /media/debian_dvd3 iso9660 defaults 0 0

/opt/debian_dvd/firmware-10.11.0-amd64-DVD-1.iso /media/debian_dvd4 iso9660 defaults 0 0
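The four fstab entries follow one pattern, so they can be generated instead of typed by hand. The sketch below is only an illustration: `fstab_lines` is a made-up helper name, and it assumes the image file names and mount points used in this post.

```python
# Sketch: build the /etc/fstab entries for the ISO images used in this post.
# fstab_lines is a hypothetical helper, not part of any Debian tool.

ISO_DIR = "/opt/debian_dvd"
IMAGES = [
    "debian-10.11.0-amd64-DVD-1.iso",
    "debian-10.11.0-amd64-DVD-2.iso",
    "debian-10.11.0-amd64-DVD-3.iso",
    "firmware-10.11.0-amd64-DVD-1.iso",
]


def fstab_lines(iso_dir, images, mount_root="/media"):
    """Return one fstab line per image, mounted at <mount_root>/debian_dvdN."""
    lines = []
    for index, image in enumerate(images, start=1):
        source = f"{iso_dir}/{image}"
        target = f"{mount_root}/debian_dvd{index}"
        lines.append(f"{source} {target} iso9660 defaults 0 0")
    return lines
```

Printing `"\n".join(fstab_lines(ISO_DIR, IMAGES))` gives text that can be reviewed and then pasted into /etc/fstab; the sketch deliberately does not write the file itself.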

Edit /etc/apt/sources.list: comment out the other active lines by putting # at the start of each, and add the DVDs as package sources:

deb [trusted=yes] file:/media/debian_dvd1 buster contrib main

deb [trusted=yes] file:/media/debian_dvd2 buster contrib main

deb [trusted=yes] file:/media/debian_dvd3 buster contrib main

deb [trusted=yes] file:/media/debian_dvd4 buster contrib main non-free

deb http://deb.debian.org/debian/ buster main contrib

deb-src http://deb.debian.org/debian/ buster main contrib
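The "comment out what was active, then add the DVD entries" edit can also be expressed as a small text transformation. This is a rough sketch under stated assumptions: `rewrite_sources` is a hypothetical helper, it treats every non-empty line that does not start with # as an active entry, and it only returns the new text rather than touching /etc/apt/sources.list.

```python
# Sketch: comment out the active entries of a sources.list and append DVD sources.
# rewrite_sources is a hypothetical helper; it only transforms text.

DVD_ENTRIES = [
    "deb [trusted=yes] file:/media/debian_dvd1 buster contrib main",
    "deb [trusted=yes] file:/media/debian_dvd2 buster contrib main",
    "deb [trusted=yes] file:/media/debian_dvd3 buster contrib main",
    "deb [trusted=yes] file:/media/debian_dvd4 buster contrib main non-free",
]


def rewrite_sources(original_text, new_entries):
    """Prefix every non-empty, non-comment line with '# ', then add new_entries."""
    result = []
    for line in original_text.splitlines():
        stripped = line.strip()
        if stripped and not stripped.startswith("#"):
            result.append("# " + line)
        else:
            result.append(line)
    result.extend(new_entries)
    return "\n".join(result) + "\n"
```

Lines that are already comments are left alone, so running the function on its own output would only comment out the DVD entries added in the previous pass; it is meant to be run once on the original file.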

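If you don't want to reboot, you can mount the images by hand:

# mount -o loop /opt/debian_dvd/debian-10.11.0-amd64-DVD-1.iso /media/debian_dvd1 (the other images are mounted the same way)

Update the package index: apt-get update

Now you can install some commonly used packages: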

# apt-get install vim curl build-essential git python3.7-dev python3-pip linux-headers-$(uname -r) libglvnd-dev dkms pkg-config

Add the current user to sudoers:

# vim /etc/sudoers

(add a line for the current user)

oopsdump ALL=(ALL) ALL

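If you work remotely, turn off vim's mouse support, otherwise right-click paste will not work in PuTTY. Edit /usr/share/vim/vim81/defaults.vim and change mouse=a to mouse-=a.

Configuring remote desktop

Install the XRDP remote desktop: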

# apt-get install xrdp

# systemctl enable xrdp

If the desktop is blank after you log in remotely, try:

# apt-get purge xserver-xorg-legacy

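Then, as the user you log in with (root is not needed):

$ echo gnome-session > ~/.xsession

If, after logging in remotely, you see the prompt "Authentication is required to create a color profile" and are asked for the administrator password, you can add the file /etc/polkit-1/localauthority.conf.d/02-allow-colord-oopsdump-com.conf with the content below. (Note: replace {group} with the user group you want to allow; the video group is one option.)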

polkit.addRule(function(action, subject) {
    if ((action.id == "org.freedesktop.color-manager.create-device" ||
         action.id == "org.freedesktop.color-manager.create-profile" ||
         action.id == "org.freedesktop.color-manager.delete-device" ||
         action.id == "org.freedesktop.color-manager.delete-profile" ||
         action.id == "org.freedesktop.color-manager.modify-device" ||
         action.id == "org.freedesktop.color-manager.modify-profile") &&
        subject.isInGroup("{group}")) {
        return polkit.Result.YES;
    }
});

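Note that if a user has not logged out of the local graphical session, remote login will not work. You can force that user to log out by running the command below over SSH. (Adjust the DISPLAY value to your situation; run ps -ef|grep Xorg and look for the value containing ":".)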

env DISPLAY=:0.0 gnome-session-quit --logout

Configure Chinese locale support:

# dpkg-reconfigure locales

**Select zh_CN.UTF-8

**Keep en_US.UTF-8 as the default language

Install Chinese input support:

# apt-get install fcitx fcitx-googlepinyin

# fcitx-configtool

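**In the window that opens, click the + button at the bottom left

**Uncheck Only Show Current Language

**Select Google Pinyin at the bottom of the list, then click OK

**You can then switch input methods with Ctrl + Space

Install VSCode for coding: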

# curl https://packages.microsoft.com/keys/microsoft.asc | gpg --dearmor > microsoft.gpg

# install -o root -g root -m 644 microsoft.gpg /usr/share/keyrings/microsoft-archive-keyring.gpg

# sh -c 'echo "deb [arch=amd64,arm64,armhf signed-by=/usr/share/keyrings/microsoft-archive-keyring.gpg] https://packages.microsoft.com/repos/vscode stable main" > /etc/apt/sources.list.d/vscode.list'

# apt-get install apt-transport-https

# apt-get update

# apt-get install code

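Installing the Nvidia proprietary driver

The Nvidia graphics driver can be downloaded from the official site: https://www.nvidia.com/Download/index.aspx?lang=en-us. Choose the GeForce type, the GeForce 10 series, and GTX 1080 Ti.

You can also download it directly from the link I used: https://us.download.nvidia.cn/XFree86/Linux-x86_64/470.57.02/NVIDIA-Linux-x86_64-470.57.02.run

First disable the open-source Nouveau driver. (For the reasons, see my earlier write-up on installing the Nvidia driver on CentOS 8.)

# vim /etc/modprobe.d/nouveau.conf

Enter the following in the file (modprobe only reads files in /etc/modprobe.d whose names end in .conf):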

blacklist nouveau

options nouveau modeset=0

# depmod -ae

# vim /etc/default/grub

Append at the end of the file:

GRUB_CMDLINE_LINUX_DEFAULT="quiet rd.driver.blacklist=nouveau nouveau.modeset=0"

# update-grub2

# update-initramfs -u
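Appending a second GRUB_CMDLINE_LINUX_DEFAULT line works because the later assignment wins, but it leaves two definitions in /etc/default/grub. As a variation on the step above (not what this post does), the sketch below merges the extra parameters into the existing line instead. `add_kernel_params` is a hypothetical helper that works on text only, and it assumes the value is written in double quotes on a single line.

```python
import re

# Kernel parameters taken from the GRUB line shown in this post.
NOUVEAU_PARAMS = ["rd.driver.blacklist=nouveau", "nouveau.modeset=0"]

_CMDLINE = re.compile(r'^GRUB_CMDLINE_LINUX_DEFAULT="(.*)"\s*$')


def add_kernel_params(grub_text, params):
    """Add params to GRUB_CMDLINE_LINUX_DEFAULT, skipping ones already present."""
    out = []
    found = False
    for line in grub_text.splitlines():
        match = _CMDLINE.match(line)
        if match:
            found = True
            current = match.group(1).split()
            for param in params:
                if param not in current:
                    current.append(param)
            line = 'GRUB_CMDLINE_LINUX_DEFAULT="{}"'.format(" ".join(current))
        out.append(line)
    if not found:
        # No existing definition: fall back to appending one, as the post does.
        out.append('GRUB_CMDLINE_LINUX_DEFAULT="{}"'.format(" ".join(params)))
    return "\n".join(out) + "\n"
```

Because parameters that are already present are skipped, applying the function twice gives the same text as applying it once. update-grub2 still has to be run after the file is changed.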

Switch off the graphical interface and reboot (with the GUI running, nouveau is still loaded automatically):

# systemctl set-default multi-user.target

# systemctl reboot

First unload the Nouveau driver: rmmod nouveau

Install the Nvidia proprietary driver: ./NVIDIA-Linux-x86_64-470.57.02.run

Restore the graphical interface and reboot:

# systemctl set-default graphical.target

# systemctl reboot

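In my case the Nouveau driver was still being loaded, but the Nvidia driver could now be loaded as well. At this point you can verify the installation: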

# nvidia-smi

Thu Nov 1 09:08:34 2021

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 470.57.02    Driver Version: 470.57.02    CUDA Version: 11.4     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  NVIDIA GeForce ...  Off  | 00000000:05:00.0  On |                  N/A |
| 21%   38C    P8    11W / 250W |     65MiB / 11176MiB |      0%      Default |
|                               |                      |                  N/A |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|    0   N/A  N/A      1040      G   /usr/lib/xorg/Xorg                 39MiB |
|    0   N/A  N/A      1070      G   /usr/bin/gnome-shell               22MiB |
+-----------------------------------------------------------------------------+
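To see which of the two kernel drivers is actually loaded, one option is to look at /proc/modules, where each line starts with a module name. The sketch below parses text in that format; `loaded_modules` and `driver_status` are illustrative names, and the sample line in the comment is made up rather than captured from this machine.

```python
# Sketch: report whether the nouveau and nvidia kernel modules are loaded,
# based on text in the /proc/modules format, for example:
#   nvidia 35274752 62 nvidia_modeset, Live 0x0000000000000000 (POE)


def loaded_modules(proc_modules_text):
    """Return the set of module names (first field of each non-empty line)."""
    names = set()
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields:
            names.add(fields[0])
    return names


def driver_status(proc_modules_text):
    """Return a dict saying whether nouveau and nvidia appear as loaded modules."""
    modules = loaded_modules(proc_modules_text)
    return {"nouveau": "nouveau" in modules, "nvidia": "nvidia" in modules}
```

On the Debian machine itself, `driver_status(open("/proc/modules").read())` would report the current state.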

Installing CUDA and cuDNN

CUDA 11.4 can be installed by following the steps on the official site. You can also use the link I downloaded from: https://developer.download.nvidia.com/compute/cuda/11.4.2/local_installers/cuda_11.4.2_470.57.02_linux.run

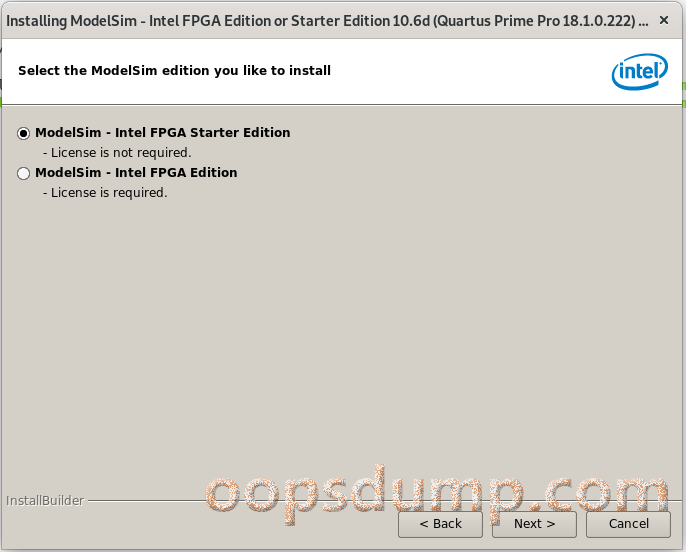

Install CUDA 11.4: ./cuda_11.4.2_470.57.02_linux.run

During installation you can deselect the driver, since the same driver version was already installed above:

┌──────────────────────────────────────────────────────────────────────────────┐
│ CUDA Installer                                                               │
│ - [ ] Driver                                                                 │
│      [ ] 470.57.02                                                           │
│ + [X] CUDA Toolkit 11.4                                                      │
│   [X] CUDA Samples 11.4                                                      │
│   [X] CUDA Demo Suite 11.4                                                   │
│   [X] CUDA Documentation 11.4                                                │
│   Options                                                                    │
│   Install                                                                    │
│                                                                              │
│                                                                              │
│ Up/Down: Move | Left/Right: Expand | 'Enter': Select | 'A': Advanced options │
└──────────────────────────────────────────────────────────────────────────────┘

Verify that the installation succeeded:

# cd /usr/local/cuda/samples/1_Utilities/deviceQuery

# make

.....

# ./deviceQuery

./deviceQuery Starting...

CUDA Device Query (Runtime API) version (CUDART static linking)

Detected 1 CUDA Capable device(s)

Device 0: "NVIDIA GeForce GTX 1080 Ti"

CUDA Driver Version / Runtime Version 11.4 / 11.4

CUDA Capability Major/Minor version number: 6.1

Total amount of global memory: 11176 MBytes (11719409664 bytes)

(028) Multiprocessors, (128) CUDA Cores/MP: 3584 CUDA Cores

GPU Max Clock rate: 1582 MHz (1.58 GHz)

Memory Clock rate: 5505 Mhz

Memory Bus Width: 352-bit

L2 Cache Size: 2883584 bytes

Maximum Texture Dimension Size (x,y,z) 1D=(131072), 2D=(131072, 65536), 3D=(16384, 16384, 16384)

Maximum Layered 1D Texture Size, (num) layers 1D=(32768), 2048 layers

Maximum Layered 2D Texture Size, (num) layers 2D=(32768, 32768), 2048 layers

Total amount of constant memory: 65536 bytes

Total amount of shared memory per block: 49152 bytes

Total shared memory per multiprocessor: 98304 bytes

Total number of registers available per block: 65536

Warp size: 32

Maximum number of threads per multiprocessor: 2048

Maximum number of threads per block: 1024

Max dimension size of a thread block (x,y,z): (1024, 1024, 64)

Max dimension size of a grid size (x,y,z): (2147483647, 65535, 65535)

Maximum memory pitch: 2147483647 bytes

Texture alignment: 512 bytes

Concurrent copy and kernel execution: Yes with 2 copy engine(s)

Run time limit on kernels: Yes

Integrated GPU sharing Host Memory: No

Support host page-locked memory mapping: Yes

Alignment requirement for Surfaces: Yes

Device has ECC support: Disabled

Device supports Unified Addressing (UVA): Yes

Device supports Managed Memory: Yes

Device supports Compute Preemption: Yes

Supports Cooperative Kernel Launch: Yes

Supports MultiDevice Co-op Kernel Launch: Yes

Device PCI Domain ID / Bus ID / location ID: 0 / 5 / 0

Compute Mode:

< Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >

deviceQuery, CUDA Driver = CUDART, CUDA Driver Version = 11.4, CUDA Runtime Version = 11.4, NumDevs = 1

Result = PASS

# make clean

...

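cuDNN has to be downloaded and installed by hand: https://developer.nvidia.com/rdp/cudnn-download

Downloading cuDNN requires registration. If you would rather not register, you can use the link I downloaded from: https://developer.nvidia.com/compute/machine-learning/cudnn/secure/8.2.4/11.4_20210831/cudnn-11.4-linux-x64-v8.2.4.15.tgz

Installation: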

# cd /usr/local/

# tar -xzvf <your save directory>/cudnn-11.4-linux-x64-v8.2.4.15.tgz

# sudo chmod a+r /usr/local/cuda/include/cudnn*.h /usr/local/cuda/lib64/libcudnn*
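A quick way to confirm that the cuDNN headers landed in the right place is to read the version macros back. The sketch below assumes the cuDNN 8.x layout, where CUDNN_MAJOR, CUDNN_MINOR and CUDNN_PATCHLEVEL are defined in cudnn_version.h; `cudnn_version` is a hypothetical helper that takes the header text as a string.

```python
import re


def cudnn_version(header_text):
    """Extract (major, minor, patch) from cudnn_version.h text, or None if missing."""
    values = []
    for name in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        match = re.search(
            r"^\s*#define\s+" + name + r"\s+(\d+)", header_text, re.MULTILINE
        )
        if match is None:
            return None
        values.append(int(match.group(1)))
    return tuple(values)
```

With the archive used here, `cudnn_version(open("/usr/local/cuda/include/cudnn_version.h").read())` would be expected to give a version starting with 8.2.4, but that depends on the header actually shipped in the tarball.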

Installing the virtual environment

Install virtualenv to keep TensorFlow isolated from Debian's own packages:

# pip3 install --upgrade virtualenv

Create a shared virtual environment:

# mkdir -p /var/venvs/

# virtualenv --system-site-packages /var/venvs/tensorflow

Apply the following diff to /var/venvs/tensorflow/bin/activate:

unset _OLD_VIRTUAL_PYTHONHOME

fi

+ if ! [ -z "${_OLD_VIRTUAL_LIBPATH_OPPSDUMP_COM:+_}" ] ; then

+ LD_LIBRARY_PATH="$_OLD_VIRTUAL_LIBPATH_OPPSDUMP_COM"

+ export LD_LIBRARY_PATH

+ unset _OLD_VIRTUAL_LIBPATH_OPPSDUMP_COM

+ fi

# This should detect bash and zsh, which have a hash command that must

_OLD_VIRTUAL_PATH="$PATH"

- PATH="$VIRTUAL_ENV/bin:$PATH"

+ PATH="$VIRTUAL_ENV/bin:/usr/local/cuda/bin:$PATH"

export PATH

+ _OLD_VIRTUAL_LIBPATH_OPPSDUMP_COM="$LD_LIBRARY_PATH"

+ LD_LIBRARY_PATH="/usr/local/cuda/lib64:$LD_LIBRARY_PATH"

+ export LD_LIBRARY_PATH

+ export CUDADIR=/usr/local/cuda

# unset PYTHONHOME if set

Whenever you need to use TensorFlow, first run:

# source /var/venvs/tensorflow/bin/activate
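After activating the environment, it can be worth checking that the modified activate script really put the CUDA directories on PATH and LD_LIBRARY_PATH. This is a minimal sketch: `cuda_paths_present` is a made-up helper, and it assumes the /usr/local/cuda locations used in the diff above.

```python
# Sketch: check an environment mapping for the CUDA directories added by the
# modified activate script. The two paths are the ones used in this post.

CUDA_BIN = "/usr/local/cuda/bin"
CUDA_LIB = "/usr/local/cuda/lib64"


def cuda_paths_present(environ):
    """Return which of PATH / LD_LIBRARY_PATH contain the CUDA directories."""
    path_entries = environ.get("PATH", "").split(":")
    lib_entries = environ.get("LD_LIBRARY_PATH", "").split(":")
    return {
        "PATH": CUDA_BIN in path_entries,
        "LD_LIBRARY_PATH": CUDA_LIB in lib_entries,
    }
```

Inside the activated environment, `cuda_paths_present(os.environ)` (after `import os`) should report both entries as present; if it does not, the diff was probably not applied to the activate script being sourced.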

Installing TensorFlow

Install TensorFlow:

**CPU version

# pip3 install --upgrade tensorflow

**GPU version

# pip3 install --upgrade tensorflow-gpu

**Older versions

# pip3 install tensorflow=={package_version}

**Other packages: PyTorch

# pip3 install --upgrade torch

**If the h5py installation fails while installing tensorflow, try the following:

# env H5PY_SETUP_REQUIRES=0 pip3 install -U --no-build-isolation h5py==3.1.0

Test command:

$ python3 -c "import tensorflow as tf;print(tf.reduce_sum(tf.random.normal([1000, 1000])))"
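The test command only shows that TensorFlow runs; it does not say whether the GPU is being used. As a hedged sketch, the function below lists the GPUs TensorFlow can see. It assumes TensorFlow 2.1 or later, where `tf.config.list_physical_devices` is available; `visible_gpus` itself is an illustrative name.

```python
# Sketch: list the GPUs visible to TensorFlow (assumes TensorFlow 2.1 or later).


def visible_gpus():
    """Return the GPU device names TensorFlow sees, or None if it is not installed."""
    try:
        import tensorflow as tf
    except ImportError:
        return None
    return [device.name for device in tf.config.list_physical_devices("GPU")]
```

An empty list means TensorFlow imported fine but found no usable GPU, which usually points at the CUDA or cuDNN libraries not being found at run time.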

If you run into the following problem:

/usr/local/lib/python3.7/dist-packages/tensorflow/python/framework/dtypes.py:516: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint8 = np.dtype([("qint8", np.int8, 1)])

/usr/local/lib/python3.7/dist-packages/tensorflow/python/framework/dtypes.py:517: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_quint8 = np.dtype([("quint8", np.uint8, 1)])

/usr/local/lib/python3.7/dist-packages/tensorflow/python/framework/dtypes.py:518: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

(...)

Downgrade numpy:

# pip3 uninstall numpy

# pip3 install numpy==1.16.4

Running the test again should now succeed.
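To check whether the downgrade took effect, the installed numpy version can be compared with the pinned one. The sketch below is an assumption-laden illustration: `parse_version` and `is_at_most` are made-up helpers that only understand simple dotted versions, and 1.16.4 is the version pinned in this post.

```python
# Sketch: compare a numpy version string with the version pinned in this post.


def parse_version(text):
    """Turn '1.16.4' into (1, 16, 4); non-numeric suffixes such as 'rc1' are ignored."""
    parts = []
    for piece in text.split(".")[:3]:
        digits = ""
        for char in piece:
            if not char.isdigit():
                break
            digits += char
        parts.append(int(digits) if digits else 0)
    return tuple(parts)


def is_at_most(installed, pinned="1.16.4"):
    """Return True if the installed version is not newer than the pinned one."""
    return parse_version(installed) <= parse_version(pinned)
```

In the virtual environment, `is_at_most(numpy.__version__)` (after `import numpy`) would tell you whether the pinned version or an older one is the one actually being imported.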